Coqui's Fourteenth Mondays Newsletter

- Pẹlẹ o 👋🐸

- African Language TTS Models

- African Languages Dataset

- 🐸TTS v0.6.2

- Community Spotlight: LordApplesause and p0p4k

Pẹlẹ o 👋🐸

By Kelly Davis

Serving up another cup of Coqui coffee goodness this month, brought to you from the birthplace of coffee, Africa!

This month we’re releasing six new text-to-speech models, all for African languages: Hausa, Yoruba, Ewe, Lingala, Asante Twi, and, let us not forget, Akuapem Twi. We’ve got you covered! And they’re all high-quality models. Take a listen to the Yoruba model in action!

Orin si eti mi! (Music to my ears!) And we’re not only releasing the models, but also the data we used to train them, all under an open-source license! (Don’t say we never did anything for you!)

In addition, this month we have a new 🐸TTS release, 🐸TTS v0.6.2, with new models and lots of small bug fixes and enhancements. We are also bringing back the community spotlight; this time we’re shining it on @LordApplesause and the ever-helpful @p0p4k. A tip of the hat to you!

Gbadun iwe iroyin naa! (Enjoy the newsletter!) 🐸

African Language TTS Models

By Josh Meyer

If you’ve already starred the 🐸TTS repo on GitHub, you probably noticed the v0.6.2 release… but maybe you missed the BIG news buried in the release notes 😎. We recently released six new state-of-the-art TTS models for six languages spoken on the African continent!

The six new languages and models are:

| Model | Language | Example Greeting |
|---|---|---|
| hau/openbible/vits | Hausa | Salama alaikum! |
| yor/openbible/vits | Yoruba | Ẹ n lẹ! |
| ewe/openbible/vits | Ewe | Nkekea nenyo! |
| lin/openbible/vits | Lingala | Boyei bolamu! |
| tw_asante/openbible/vits | Asante Twi | Me ma wo akye! |
| tw_akuapem/openbible/vits | Akuapem Twi | Mpɔ mu te sɛn? |
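If you would rather try these models locally than in the browser, the 🐸TTS command-line tool selects a model with `--model_name` and writes audio with `--out_path`. Below is a minimal Python sketch that turns the model IDs from the table into a `tts` invocation. It assumes the full 🐸TTS model name is the table entry prefixed with `tts_models/` (following the `<model_type>/<language>/<dataset>/<model_name>` pattern); the helper `tts_command` and the dictionary `AFRICAN_MODELS` are hypothetical names for illustration only.

```python
import shlex
from typing import List

# Model IDs from the table, keyed by language name.
# ASSUMPTION: the full 🐸TTS name is "tts_models/" + the table entry.
AFRICAN_MODELS = {
    "Hausa": "hau/openbible/vits",
    "Yoruba": "yor/openbible/vits",
    "Ewe": "ewe/openbible/vits",
    "Lingala": "lin/openbible/vits",
    "Asante Twi": "tw_asante/openbible/vits",
    "Akuapem Twi": "tw_akuapem/openbible/vits",
}


def tts_command(language: str, text: str, out_path: str = "output.wav") -> List[str]:
    """Build a `tts` CLI call (as an argument list) for one of the new models.

    Hypothetical helper: pass the result to subprocess.run() once 🐸TTS
    is installed; the model weights are downloaded on first use.
    """
    model_name = "tts_models/" + AFRICAN_MODELS[language]
    return ["tts", "--text", text, "--model_name", model_name, "--out_path", out_path]


if __name__ == "__main__":
    cmd = tts_command("Yoruba", "Ẹ n lẹ!", "yoruba.wav")
    # Print a shell-ready version of the command instead of running it.
    print(shlex.join(cmd))
```

Building an argument list rather than one shell string sidesteps quoting problems with spaces and punctuation such as `!` in the input text; `shlex.join` is only used to show a copy-pasteable version.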

Go check out the models for yourself on our official Hugging Face Spaces page!

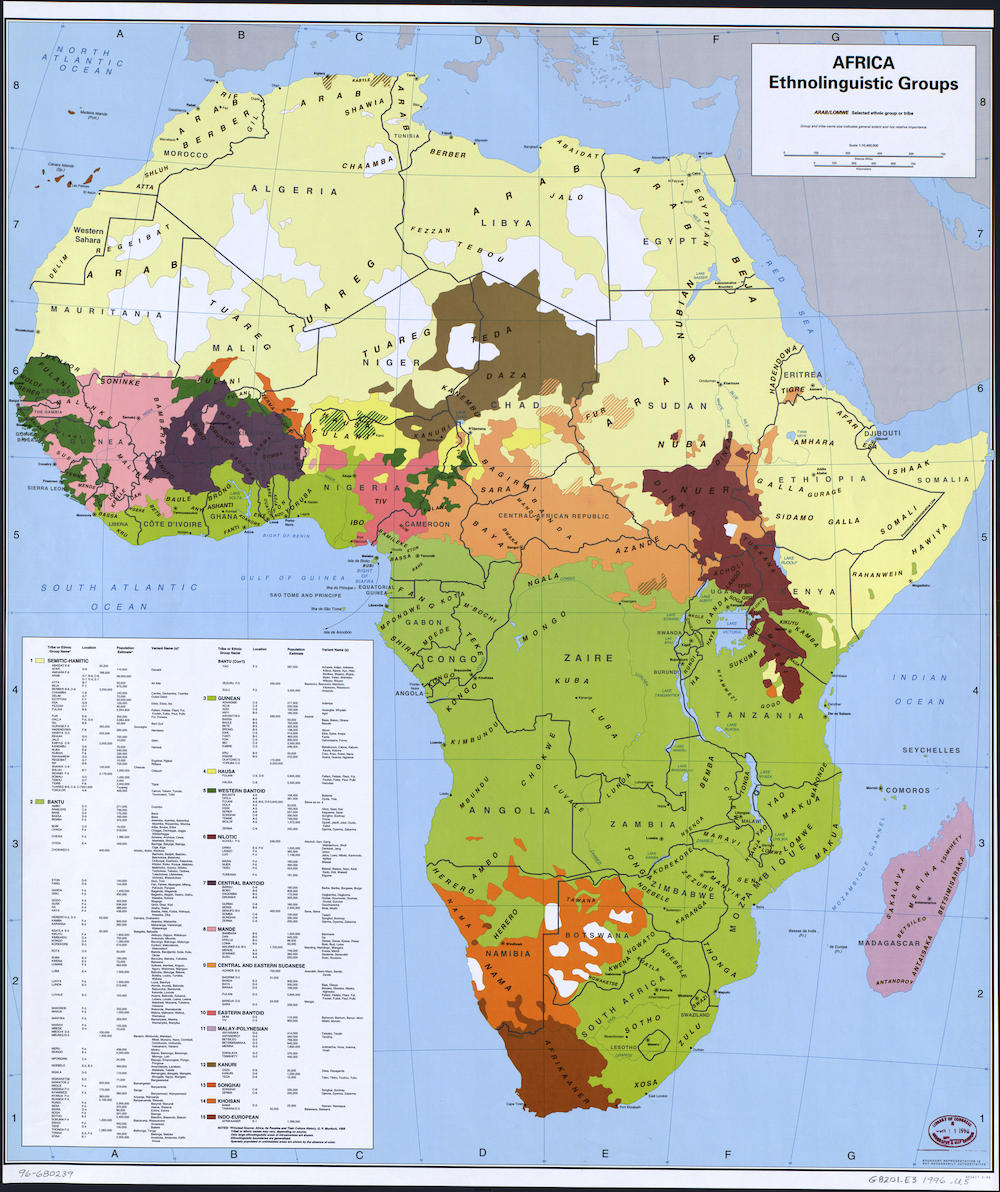

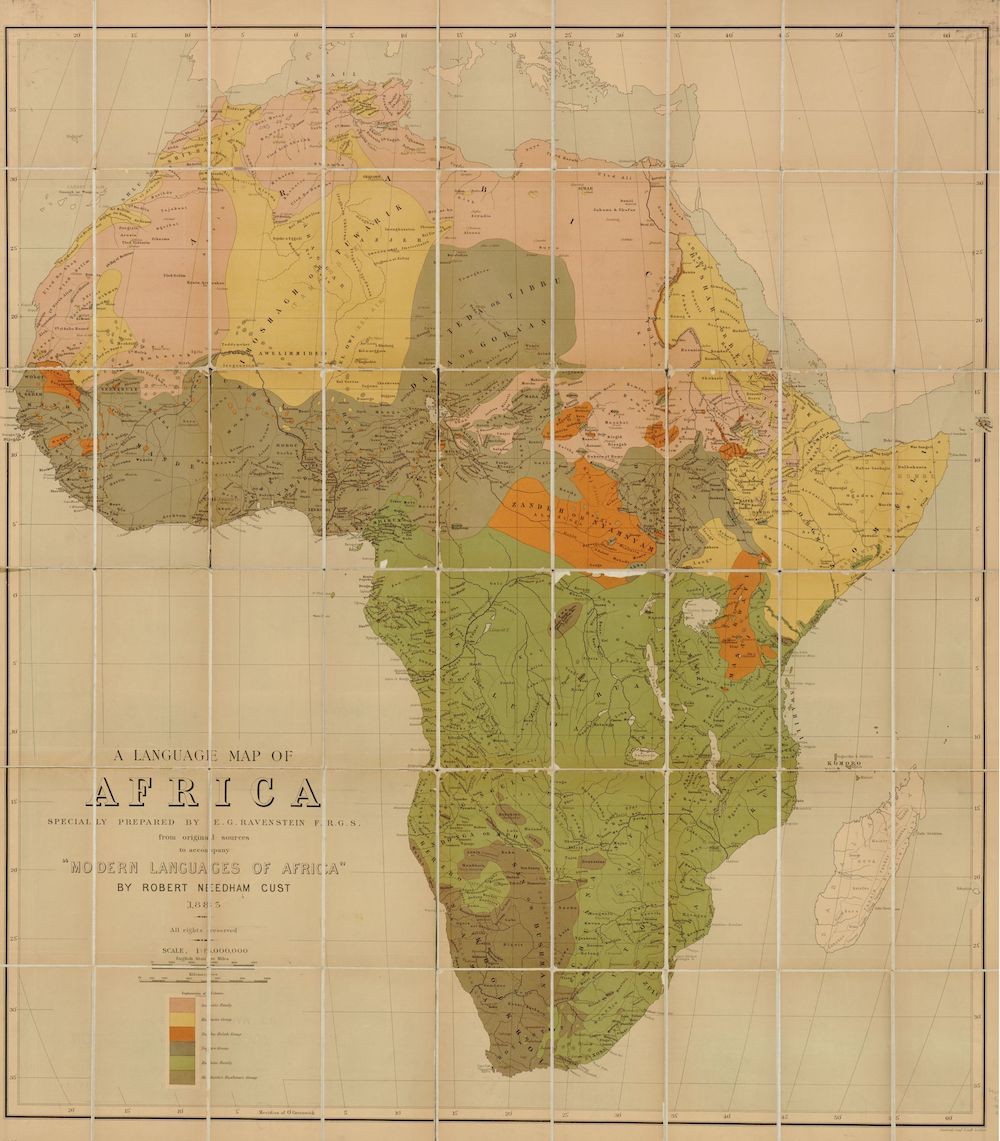

These languages are collectively spoken by millions and millions of people every day!

These models are special for a few reasons. They are:

- ✅ The result of our first-ever collaboration with the excellent Masakhane group

- ✅ The first African languages ever released in 🐸TTS

- ✅ New state-of-the-art synthetic voices in all six languages

- ✅ Released under a commercially friendly Creative Commons license

The successful training of these models shows once again how linguistically flexible 🐸TTS really is. We used an efficient trick to train them quickly: we taught one of our English models to speak each of these languages. Using a kind of cross-lingual transfer learning, we fine-tuned a model trained on an American English woman’s voice until it sounded like a man speaking Yoruba (for example), which saved us a huge amount of time. It’s yet another example of 🐸TTS learning a new language fast!

Even if you don’t speak these languages, you can appreciate the quality of the TTS models. Here’s the Yoruba model reading a paragraph on food:

African Languages Dataset

By Josh Meyer

In the section above, we told you about the new high-quality African-language models we just released with 🐸TTS. What you don’t know yet is that we’ve been collaborating with excellent researchers from Africa and all over the world to build the dataset used to train those new voices.

As you might know, data is the lifeblood of deep learning. These shockingly realistic synthetic voices wouldn’t have been possible without a shockingly high-quality dataset. In collaboration with Masakhane and researchers from Carnegie Mellon, Johns Hopkins, Saarland University, and elsewhere, we created that dataset, and we’re releasing it to the world under a commercially friendly Creative Commons license. All the juicy details on how we created the dataset and trained the models are slated to come out at INTERSPEECH 2022 (a top-tier research conference for speech technology). Until then, you can read more in our blog post!

🐸TTS v0.6.2

By Eren Gölge

This minor release brings significant bug fixes and improvements. Most importantly, it ships with six new TTS models for African languages that you can try right now in 🐸TTS.

This is also our first release with our new team members at Coqui: Edresson Casanova, Julian Weber, and Logan Harth. We welcome them once again, and cheers to all the success we’ll have together 😄.

You can also check the release notes to learn more about v0.6.2.

Community Spotlight: LordApplesause and p0p4k

By Eren Gölge

This month we wanted to shine our spotlight on @LordApplesause and @p0p4k.

@LordApplesause created a great blog post that quickly gets you up to speed on using 🐸TTS to train your own text-to-speech model in Google Colab.

@p0p4k has been one of our most active members in the 🐸TTS discussion forum. Beyond the forum, he’s also expanding everyone’s horizons with reviews of two important papers, “Conditional Variational Autoencoder with Adversarial Learning for End-to-End Text-to-Speech” and “Glow-TTS: A Generative Flow for Text-to-Speech via Monotonic Alignment Search”. Both reviews were a pleasure to read.

Let us know if you’ve done something cool 😎 with our open-source projects, and you could get a shout-out here along with some of our cool 😎 swag.